Artificial Intelligence: enemy of job security or productivity champion & job enhancer?

“…with the great power of AI comes great responsibility to evolve roles and reskill employees for this brave new world. By implementing AI technologies simply to speed up business processes, companies risk driving away the very people needed to guide the machines and work alongside them to enable future growth.”

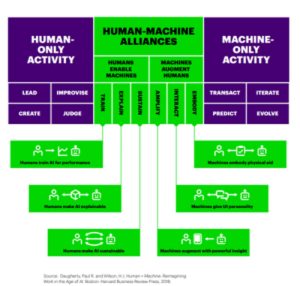

From Daugherty, Paul R. & Wilson, H. James, Human + Machine: Reimagining Work in the Age of AI, published in Boston: Harvard Business Review Press

It’s very difficult to write about Artificial Intelligence (AI) or Machine Learning (ML) without appearing to be a prophet of doom, and there are certainly quite a few doom merchants attached to this topic already. Not least among them is the Bank of England, whose chief economist Andy Haldane recently declared that as many as 15m jobs will be made obsolete by AI within the next 35 years.

Haldane said automation posed a risk to almost half those employed in the UK and that this ‘third machine age’ would hollow out the labour market, widening the gap between rich and poor. The results of a Bank of England study, Haldane added, suggested that administrative, clerical and production tasks were most at threat.

If you’ve been sucked into reading these sorts of scare stories, it becomes difficult to get a perspective on the potential upside of AI, so I thought I’d take a closer look at this. Accenture’s Process Reimagined study of 1,000 businesses, as detailed in the book quoted above, makes a strong case for the flip side of the argument. The book explains, essentially, that humans are needed to Lead, Improvise, Create and Judge ML outputs, whilst machines are great at transacting, predicting (based on analysis of masses of data), evolving and iterating (through ML). In other words, it becomes possible to get machines not only to speed up business processes but also to self-heal, self-adapt and self-optimise as they go… if humans build the algorithms correctly, of course.

What this report also indicates is that humans have a key role in making sure algorithmic decision-making is fair, safe, and transparent or auditable. In other words, humans will not only need to design the algorithms to deliver reliable efficiencies and insights; they will also be needed at the other end to sense-check what the machines are doing and recommending.

It seems clear to me that we need lots of management-level people defining what questions we want the algorithms to answer and which business processes we want them to support. We also need masses of data scientists to keep ML projects on track. What Accenture’s book also emphasises is the importance of using machine learning to augment people’s jobs: taking more of the grunt work out, delivering process efficiencies and providing actionable insights which humans can then use to make their jobs both more productive and more interesting. The report makes it clear that machine learning adoption needs to be designed to improve employee retention and job satisfaction, not undermine job security and drive talent away.

We certainly need human ingenuity to help machines turn ever larger amounts of structured and unstructured data into actionable insights which can help businesses find and engage more prime prospects, sell more, increase wallet share, command greater customer loyalty, and so on.

One of the key benefits of ‘early AI’ was seen in the ‘Recommender’ algorithms deployed with gusto by Amazon when it came through with the online retail revolution of ‘People who bought this (or what you are perusing) also bought these…’ These recommender algorithms are now in wide use by online retailers, and they definitely encourage us to buy more because they enhance the user experience. They are particularly good for selling accessories to a main purchase. However, for AI to work for all organisations selling all manner of products and services, human employees must define the questions which need answering by turning masses of data into insights which are likely to lead to results. This requires leadership, vision, creativity and judgement. Humans are also needed to help steer through tightening personal data privacy laws and to ensure machines are not unintentionally misleading customers or mis-selling products to them.
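To make the ‘also bought’ idea concrete, here is a minimal Python sketch of one simple way such a recommender could work: counting how often items turn up in the same basket. It is an illustration only, not a description of how Amazon or any other retailer actually does it. The function names and the sample baskets are made up for the example.

```python
from collections import Counter, defaultdict
from itertools import permutations


def build_co_purchase_counts(baskets):
    """Count, for each item, how often every other item shares a basket with it."""
    co_counts = defaultdict(Counter)
    for basket in baskets:
        # set() ignores duplicate items within a single basket
        for item, other in permutations(set(basket), 2):
            co_counts[item][other] += 1
    return co_counts


def also_bought(co_counts, item, top_n=3):
    """Return up to top_n items most often bought together with `item`."""
    # Sort by count (highest first), then alphabetically to break ties.
    ranked = sorted(co_counts.get(item, {}).items(),
                    key=lambda pair: (-pair[1], pair[0]))
    return [other for other, _ in ranked[:top_n]]


# Hypothetical purchase history: each inner list is one customer's basket.
baskets = [
    ["camera", "memory card", "camera bag"],
    ["camera", "memory card"],
    ["camera", "tripod"],
    ["laptop", "laptop sleeve"],
]

co_counts = build_co_purchase_counts(baskets)
print(also_bought(co_counts, "camera"))
# prints ['memory card', 'camera bag', 'tripod']
```

Even in a toy like this, the human decisions are visible: someone has to choose what counts as a ‘basket’, how many suggestions to show, and whether a suggestion is appropriate to make at all.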

There has been a great deal of talk recently about using AI to create virtual digital assistants or ‘chatbots’ designed to personalise user experience – helping customers answer product or service queries; gather more knowledge about a product; or simply get a status update. The latter is important for financial services, where many products (e.g. pensions, life assurance, private medical insurance, home & contents cover, motor insurance etc.) need to be checked for a number of reasons, e.g. claims status, cover levels, amounts saved and additional benefits.

Essentially, chatbots are already being designed to reduce the heavy workload of call centres and customer service departments. AI-backed chatbots are being designed to remove the more mundane queries from agents’ workloads; thereby giving call centre agents the more interesting and challenging work of deepening customer engagement, cross-selling complementary services, and the like.

The most public example of a pensions-linked chatbot in the UK is the pension valuation ‘skill’ that Aviva launched for its policy holders earlier this year, having gone public in early 2017 with its development programme for Amazon Echo, the AI-enabled voice interaction device.

With ML, it’s even possible to conceive of discovering patterns that help predict events likely to affect a customer’s profile (e.g. if someone requests a pension valuation for the first time in 20 years, it’s likely they are considering their decumulation options).
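As a rough illustration of the pension valuation example, the Python sketch below flags a customer whose valuation request follows a long period of silence. It is a hand-written rule standing in for a pattern that an ML model would learn from data, not ML itself. The function names, the 20-year threshold and the sample dates are all assumptions made for the example.

```python
from datetime import date

DAYS_PER_YEAR = 365.25


def years_of_silence(previous_requests, policy_start, today):
    """Years since the last valuation request, or since the policy began if there was none."""
    earlier = [d for d in previous_requests if d < today]
    last_contact = max(earlier) if earlier else policy_start
    return (today - last_contact).days / DAYS_PER_YEAR


def may_be_considering_decumulation(previous_requests, policy_start, today, gap_years=20):
    """Flag a valuation request made today if it follows a gap of at least gap_years."""
    return years_of_silence(previous_requests, policy_start, today) >= gap_years


# Hypothetical customers requesting a valuation on the same day.
today = date(2018, 10, 2)
long_silent = may_be_considering_decumulation([], date(1995, 3, 1), today)
regular_checker = may_be_considering_decumulation([date(2016, 5, 1)], date(1995, 3, 1), today)
print(long_silent, regular_checker)  # prints True False
```

A flag like this is only a prompt for a conversation. It still takes human judgement to decide what, if anything, should be offered to the customer as a result.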

The theory is that dialogue between customers and chatbots can be captured, ingested and added to a company’s customer profiles, so that the company can build up a richer understanding of each customer’s personal circumstances, financial priorities and lifestyle aspirations. It can then use this increased intelligence to develop more personalised, contextual engagement, building trust and loyalty without necessarily involving any humans at the provider end during this learning process.

Bank of America has developed a financial management chatbot called Erica, which builds up an understanding of its customers’ financial status and recommends changes based on an analysis of their money movements. Its transaction search capability has been particularly popular with customers. As the platform matures, it’s possible that the chatbot could offer options designed to improve customers’ cashflow management or help them avoid breaching overdraft limits, for example.

In summary, businesses launching AI-based services need to:

1. Make sure they know what questions they want to answer.

2. Make sure the algorithms are delivering actionable insights.

3. Start by using AI to improve business processes before going public with customer-facing chatbots and the like.

4. Ensure AI is enhancing their employees’ jobs, not undermining them.

5. Remember what humans offer and what machines do best – both have key roles in making life better for everyone including the customer over time.

Published: 2 October 2018